2.3.1 Downsampling:

Why change the sample frequency?

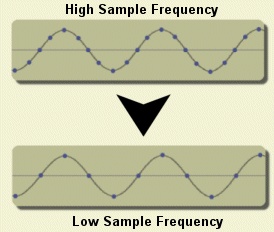

Sampling sound data is much like filming video. A video camera must take pictures at a high frequency in order to produce a smooth video. Like a video, a sound file contains many snapshots of sound that, when played back, closely reproduce the original signal. These "sound snapshots" are called samples. The higher the sample frequency of a digital sound file, the closer the reconstructed signal is to the actual recorded signal.

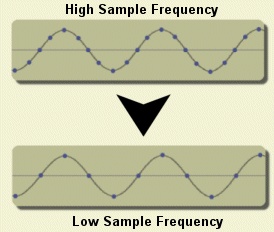

Higher sample frequencies mean bigger files, because more samples are stored for every second of audio. The speech file used in our example has a sample frequency of 16 kHz. Our recognizer can accept speech files with virtually any sample frequency, so you may want to experiment with downsampling to better understand the tradeoff between performance and sample frequency. See Section 2.3.2 to learn more about the downsampling process.
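Section 2.3.2 describes the downsampling process itself. As a rough, independent illustration (not necessarily the procedure used with our recognizer), the sketch below halves a 16 kHz signal to 8 kHz by first low-pass filtering below the new Nyquist frequency of 4 kHz and then keeping every second sample. The filter design (a 31-tap Hamming-windowed sinc) and the names `lowpass_fir` and `downsample` are illustrative assumptions. Skipping the filter would let content above 4 kHz alias into the lower band, as the last line of the demo shows.

```python
import math

def lowpass_fir(cutoff_hz, fs_hz, num_taps=31):
    """Hamming-windowed sinc low-pass FIR, scaled to unity gain at DC."""
    fc = cutoff_hz / fs_hz  # cutoff in cycles per sample
    m = num_taps - 1        # filter order; taps are centred on m / 2
    taps = []
    for n in range(num_taps):
        k = n - m / 2
        ideal = 2 * fc if k == 0 else math.sin(2 * math.pi * fc * k) / (math.pi * k)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / m)
        taps.append(ideal * window)
    total = sum(taps)
    return [t / total for t in taps]

def downsample(samples, fs_in_hz, factor):
    """Anti-alias filter, then keep every `factor`-th sample.

    Returns (new_samples, new_sample_frequency). The signal is treated as
    zero beyond its ends, so a few samples at each edge show filter start-up.
    """
    fs_out_hz = fs_in_hz / factor
    # Cut off a little below the new Nyquist frequency (fs_out / 2).
    taps = lowpass_fir(0.45 * fs_out_hz, fs_in_hz)
    half = len(taps) // 2
    out = []
    for i in range(0, len(samples), factor):
        # Centred convolution, evaluated only at the samples we keep.
        acc = 0.0
        for j, h in enumerate(taps):
            idx = i + half - j
            if 0 <= idx < len(samples):
                acc += h * samples[idx]
        out.append(acc)
    return out, fs_out_hz

if __name__ == "__main__":
    fs = 16000
    t = [n / fs for n in range(1600)]  # 0.1 s of signal
    in_band = [math.sin(2 * math.pi * 500 * x) for x in t]
    above_band = [math.sin(2 * math.pi * 6000 * x) for x in t]
    kept, fs_new = downsample(in_band, fs, 2)
    removed, _ = downsample(above_band, fs, 2)
    naive = above_band[::2]  # no filter: the 6 kHz tone aliases to 2 kHz
    print(fs_new, len(kept))
    print("500 Hz peak after filtering:", round(max(abs(v) for v in kept[20:-20]), 3))
    print("6 kHz peak after filtering:", round(max(abs(v) for v in removed[20:-20]), 3))
    print("6 kHz peak without filtering:", round(max(abs(v) for v in naive), 3))
```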

Most large-scale speech applications in telephony, such as cellular phone-based applications, use speech sampled at 8 kHz. Most workstation-based applications, such as dictation, use a 16 kHz sample frequency.
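The difference between the 8 kHz and 16 kHz figures above is easy to quantify. Assuming uncompressed 16-bit mono PCM with no file header (an assumption; actual file formats vary), a file stores two bytes per sample, and by the Nyquist criterion it can represent frequencies only up to half the sample frequency. The helper names `bytes_per_second` and `nyquist_hz` are hypothetical.

```python
def bytes_per_second(fs_hz, bits_per_sample=16, channels=1):
    """Raw PCM data rate: samples/s x bytes/sample x channels (no header)."""
    return fs_hz * (bits_per_sample // 8) * channels

def nyquist_hz(fs_hz):
    """Highest frequency representable without aliasing: half the sample rate."""
    return fs_hz / 2

for label, fs in [("telephony", 8000), ("workstation", 16000)]:
    per_min = bytes_per_second(fs) * 60
    print(f"{label}: {fs} Hz -> {bytes_per_second(fs)} B/s, "
          f"{per_min} B/min, bandwidth up to {nyquist_hz(fs):.0f} Hz")
# telephony: 8000 Hz -> 16000 B/s, 960000 B/min, bandwidth up to 4000 Hz
# workstation: 16000 Hz -> 32000 B/s, 1920000 B/min, bandwidth up to 8000 Hz
```

Doubling the sample frequency doubles both the data rate and the range of frequencies the file can capture.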


The impact of sample frequency on performance has been studied extensively over the years. We have recently spent some time characterizing performance differences between 8 kHz and 16 kHz for a speech-in-noise application. See Aurora Evaluations for details, including a comprehensive document describing the performance of the baseline system used in this evaluation.
